Neuroscientists are working at the cutting edge of technology and brain science to develop new ways for people with vision disabilities to navigate the world around them.

At the annual meeting of the Cognitive Neuroscience Society (CNS), researchers are presenting new techniques for integrating digital haptics and sound technology to transform vision rehabilitation for both children and adults.

“Vision rehabilitation requires bridging fundamental research, modelling and neuroimaging methods,” said Benedetta Franceschiello of the University of Lausanne, who is chairing the symposium on vision rehabilitation at CNS 2021 Virtual. The new wave of devices to help the visually impaired combines these areas to deliver more personalized, democratized technological solutions.

“We have cutting-edge analysis techniques, the computational power of supercomputers, the ability to record data to a level of detail that was not possible before, and the ability to create increasingly sophisticated and portable rehabilitation devices,” she says.

At the same time, neuroscientists have great insight into the brain’s plasticity and how the brain integrates information from multiple senses. “This field is developing at a fast rate, which is vital,” said Ruxandra Tivadar of the University of Bern, “as having reduced or a lacking sensory function is an extremely grave impairment that impacts everyday function and thus the quality of life.”

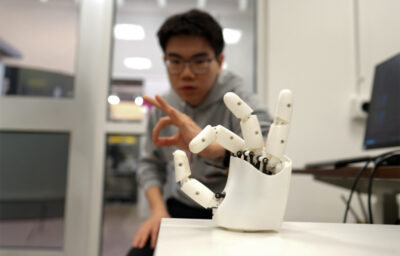

Tivadar is presenting new research showing how feedback from digital haptics – using the sense of touch coupled with motion – enables the visually impaired to easily learn about new objects and spaces. It’s just one way researchers are leveraging all the human senses to develop new technology for vision rehabilitation.

Teaching a person with a vision disability how to navigate a new space is a tedious process, Tivadar says. “We need occupational therapists, we need to make tactile maps with different textures and, especially, for different places, and then we have to actually train the individual on tactile exploration before training them on the street,” she explains. “Imagine future mobile phones with digital haptic feedback that allow the visually impaired to learn about new spaces instantly.”

That is the future Tivadar is working toward, and with new results being presented at CNS, she and colleagues are showing that it is possible, and soon. In new unpublished research, Tivadar’s team used digital haptics to represent the layout of an apartment in 2-D. Blindfolded sighted participants learned the layout by exploring a digital haptic rendering of it. They then had to navigate and complete tasks in the real, physical space, which was previously unknown to them.

“Our data show that people learn these layouts well after only 45 minutes of training,” Tivadar says. “In addition, we also see that participants have absolutely no problem in learning easy trajectories in this layout; all of our participants succeed at this task, whether previously trained or not.”

The data also suggest that participants who trained on harder navigation tasks performed better than those who trained only on easier trajectories. “These findings imply that for really simple layouts, people need minimal training to succeed at imagining a space in their minds and then physically navigating it,” Tivadar says.

Advances in big data analysis helped make this work possible. The researchers filmed the hands of 25 participants while they were exploring a haptic tablet for 8 to 10 minutes at a time. Tivadar’s team then used a deep neural network to analyze the roughly 200 videos, using an algorithm that learns to track the movement of a single finger of each participant. “This enabled us to understand better how participants interact with haptics and to help further develop applications of this technology,” Tivadar says. “Imagine if we can ‘see’ objects and spaces using one finger, what we could do by using all 10 of our fingers, or our whole palm to feel.”

“We are able to do better visual rehabilitation when incorporating new technologies and using the brain’s properties, such as multisensory integration and cross-modal plasticity. We need to look further into how our senses can complement or supplement each other in order to find low-cost solutions to vision rehabilitation,” said Tivadar.